TFS As Your Build/CI Server: Only Positive Takeaways 1 of 2

This post is a grab bag of information, techniques, and landmines I wish someone had told me when we first set out to run our build/deploy on top of TFS. What follows is a short, all-positive compilation of everything I’ve learned about TFS 2010. I’ll assume you work with TFS on a daily basis, and thus won’t attempt to explain TFS concepts (shelving, for example).

All positive

All-positive means that I will not complain about TFS. I will not. I’ll only provide helpful workarounds I’ve found.

Mini-review of TFS’s continuous integration featureset

Between TeamCity and TFS, having used both in two environments and having recently used the newest versions of both, I’d prefer TeamCity in a landslide. I could fire off a bulleted list of specific ways TeamCity is better, but in the interest of staying positive, let’s just move on.

Tips for working with TFS source control

Merging

If in doubt, don’t automerge. If you are having problems with TFS merges, you can solve all your problems by manually merging every file. TFS 2005 was notoriously bad at auto-merging (i.e. performing server-side merges), so the only way to win was not to play.

In TFS 2010, I will 99% certify that automerging works. TFS 2010 has improved, and our team has had almost perfect success with automerging, though there are hiccups here and there. We’ve had merging issues, but I’m not convinced our merging issues are automerge issues. 99% is pretty good. Let me know in the comments if you can definitely blame TFS 2010 automerge for a botched merge.

Replace your client-side merge tool with one you can trust. The built-in VS2010 merge/compare tool works. However, I had “an incident.” “Incidents” are bad when merging. What happened is, the VS merge tool mistakenly matched up two entirely different methods and attempted to “merge” the changes. Merging the contents of method A() into method B() is bad. It’s bad enough to go looking for a replacement. So, following these instructions, I replaced the built-in merge tool with Perforce’s free P4Merge.

Weird merge conflicts with renamed files? Accept the lesser victory and step a) delete, then step b) re-create any files that cause weird merge issues. This breaks the file’s version history, but solves your bigger problem.

Workspace tips

I know lots of you have problems with TFS and how it deals with files. I don’t. I don’t know if I’m just not exercising the tool enough, but I’m not having problems, now that I know what not to do. Specific advice follows:

Don’t go offline. The consequences can be worse than you think. I’ve never had success with offline mode, and what’s worse, until you go back online, Team Explorer hides your TFS server from the list. I’ve had something of a traumatic experience with offline mode, so it’s hard to stay positive, or even fake sounding positive, when describing it. Just don’t go offline unless you know how to get back online. For the record, I’m using the newest of the new with TFS 2010 and Visual Studio 2010, and even with the newest tools I’m experiencing problems. I’ll give a blanket recommendation that you don’t try it.

Do as much editing as possible inside Visual Studio Solutions or Projects. It’s easier to create, edit, move, and delete files inside Solutions or Projects (files inside Solutions are automatically tracked in TFS). Treat Solutions and Projects as a rail ride: stay in the cart travelling slowly down the rails. Do not exit the cart. In case of fire, follow emergency procedures (spelled out further below).

This goes against advice I’ve heard. I’ve heard that TFS source control is more manageable from the command-line than via Visual Studio. But for me, I prefer to let Visual Studio’s integration automatically check out files for editing. So, whenever possible I work underneath the protective umbrella of Solutions and Projects.

Remember TFS does not automatically track file changes, not even partially like SVN or git. This means:

Explicitly rename or move files in Source Control Explorer.

Explicitly delete files from Source Control Explorer.

Explicitly add files from Source Control Explorer.

Check out files in Source Control Explorer to edit. Or, reworded:

Be sure to check out first, before editing files outside of Visual Studio. This means when running any kind of code generation, generating assembly info files, or even something as simple as editing PowerShell scripts with the ISE—in all cases, be sure to check out first. Then edit. Last, check in (or undo).

If you don’t follow these steps in order, you’ll experience bad things. Notably, if you try to check out after successfully editing a file, you’re presented with a merge conflict.
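For scripted edits (code generation, generated assembly info files), the check-out-first order can be baked into a small wrapper. This is only a sketch under assumptions: it assumes `tf.exe` is on the PATH and that the script runs inside a mapped workspace, and the names `checkout_command` and `edit_with_checkout` are made up for illustration, not part of TFS.

```python
import subprocess
from typing import Callable, List


def checkout_command(paths: List[str]) -> List[str]:
    """Build the `tf checkout` command line for the given files."""
    if not paths:
        raise ValueError("at least one path is required")
    return ["tf", "checkout"] + list(paths)


def edit_with_checkout(paths: List[str], edit: Callable[[], None],
                       run=subprocess.check_call) -> None:
    """Check out first, then edit. Checking in (or undoing) stays manual."""
    run(checkout_command(paths))  # step 1: tell TFS before touching the files
    edit()                        # step 2: run the generator or script
```

The `run` parameter exists only so the order of operations can be exercised without a TFS server; in real use the default `subprocess.check_call` stops the edit from happening if the checkout fails.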

If something gets weird, destroy your entire folder (or entire Workspace) and get latest+force override. Don’t try to get too specific and get one or two files. Delete the whole folder, then get latest+force override. It’s quick, just do it.
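If you find yourself doing this often, the delete-then-force-get routine could be scripted. A rough sketch, assuming `tf.exe` is on the PATH and `folder` is a mapped local path; `force_get_command` and `reset_folder` are hypothetical helper names. Note that it throws away any local edits under the folder, so shelve anything you want to keep first.

```python
import os
import shutil
import stat
import subprocess
from typing import List


def force_get_command(folder: str) -> List[str]:
    """Build a `tf get` call that re-downloads everything under `folder`."""
    # /force combines /all and /overwrite
    return ["tf", "get", folder, "/recursive", "/force"]


def _make_writable_and_retry(func, path, _exc_info):
    # Workspace files are read-only unless checked out, so deletion can
    # fail on Windows; clear the flag and try the same operation again.
    os.chmod(path, stat.S_IWRITE)
    func(path)


def reset_folder(folder: str, run=subprocess.check_call) -> None:
    """Delete the whole local folder, then get latest with force."""
    if os.path.isdir(folder):
        shutil.rmtree(folder, onerror=_make_writable_and_retry)
    run(force_get_command(folder))
```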

There’s no good way to temporarily edit a file (e.g. temporarily change the connection string in your app.config) without triggering a checkout. If you ever need to temporarily edit a file but don’t ever want to check in the change, well…there’s really no good way to go about it. In fact there are several not-good ways to go about it:

- Just check out the file and edit it, and simply remember to undo your changes later.

- Cheat. Open the file via Windows Explorer, and unset its Read-Only flag. And, when you want the file to go back to its original state, simply remember to get the latest version of the file + force override.

- Cheat. Open a prompt at the root of your workspace and run attrib -r *.* /s. This is the nuclear option, as TFS will now assume you’ve edited every single file in your workspace, and will treat any updates as merge conflicts. Don’t do this. I’ve done it so I can tell you not to try it.
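Whichever not-good option you pick, it helps to be able to see which files are currently writable before you check in or get latest. The sketch below walks a folder and lists every file whose Read-Only flag is cleared. It is an illustration under an assumption: it treats the flag as the only signal, so files you have legitimately checked out will show up in the list as well. `writable_files` is a made-up name.

```python
import os
import stat
from typing import List


def writable_files(root: str) -> List[str]:
    """Return files under `root` whose read-only flag is cleared."""
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            # S_IWRITE is unset when the Read-Only attribute is set
            if os.stat(path).st_mode & stat.S_IWRITE:
                found.append(path)
    return sorted(found)
```

Comparing this list against your pending changes gives a quick hint about files that were edited behind TFS’s back.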

Shelvesets work. Trust them. Use them. Use them frequently. Make as many of them as you want. Give them dumb names if they contain trash (I have shelvesets named “aaaaaaa” and “aaaaaaaaaaaa”, and of course, one named “help”). You can find them later, just sort by date. Easy.

Always shelve from Source Control Explorer to keep things simple. If you Shelve a Solution, you may miss files. I’ve missed files when I shelved a Solution. Don’t be me.

MSTest tips (specifically: using MSTest for your unit and integration tests)

Switching from NUnit is a cinch. All the attribute names are different, but only slightly. With the exception of one MSTest feature:

Learn about localtestrun.config and how it works. We’ve started using it, and while it’s convenient, it’s essentially a non-composable* way to copy files you need for your tests.

* i.e. once you start using localtestrun.config, you can’t just switch to NUnit or XUnit without some pain. Alternately, if you had coded up manual file copying, you wouldn’t have any issues converting to or from NUnit/XUnit. Also, the localtestrun.config may be responsible for our extremely slow test runs.

The test runner is excellent…and slow. It’s not entirely the test runner’s fault, as the Resharper test runner is equally slow (I’ve tried). I gave up using Resharper’s test runner when I found out it was just as slow, and has imperfect (broken) localtestrun.config support. Note, tests are slower by a large factor if you’re running code coverage.

If not for the slowness and the occasional bug and some wonky behavior during debug sessions, I’d say the VS test runner is as good as Resharper’s test runner or TestDriven.NET. Short note about TestDriven.NET: like VirtualBox, it’s not free for corporate use. Read the EULA.

IntelliTrace sounds nice, but crashes test runs. We turned it off so it stopped crashing our test runs.

Learn the keystrokes for running tests. CTRL+R, T runs the tests in the current context. CTRL+R, A runs all tests in the solution. Similar key chords run tests with the debugger attached. If you forget the keystrokes, go to the Test->Run menu and they’re listed there. Just memorize them.

Ignore most of the Visual Studio testing features. They do not help you write unit or integration tests. Specifically:

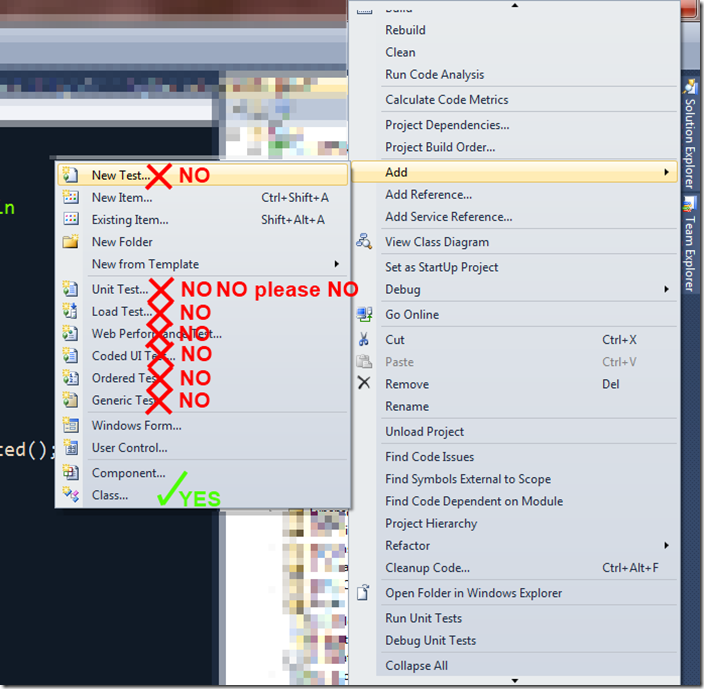

- All “Test”s available from the right-click->Add menu are a bad idea. Add a “Class” instead. Diagram:

- Create Unit Test (“Unit Test…” as seen in the screenshot above) in particular will only mislead you. The other tests (e.g. Coded UI Tests) are useful in other contexts, but I can’t think of any situation for which the “Create Unit Test” dialog is useful.

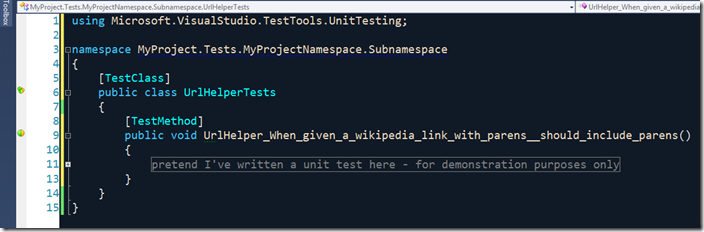

Start from an empty class when writing new unit tests. While the “Basic Unit Test” template works (and is an excellent tool to help you learn the MSTest attributes), a clean file is better. Apply YAGNI. You don’t need a TestInitialize or ClassInitialize method yet, so don’t add them. You can add either of them later, if you need them. For now, leave them out. YAGNI. This is what one of my new test classes look like:

*note: I am using this naming convention presently. It’s not so bad. We add the class name prefix to the method name (UrlHelper_) so test results can be sorted and understood and so there aren’t hundreds of “When_etc” names in a row. And yes, I’m aware that you can add columns, specifically the test class’s full name, to the test results display, but it’s not a first-class citizen and doesn’t help when running tests in the build. Stay on the rails and just embed the class name in your test method. Side note: sometimes I need to split out my test classes to support more than one test fixture (context) per class-under-test, and I do so. Read up on test fixtures and class-per-fixture if you’re intrigued as to why I’d want such a thing.

If you have a bad test portfolio (i.e. “our tests suck”), it’s not MSTest’s fault. Using NUnit, XUnit, MSpec, or any of the (literally 20 or so) .NET BDD frameworks will not help you if you don’t have the basics. MSTest is indeed limiting in some ways, but I’m far more limited by my coding/design knowledge than MSTest itself. With not much extra effort, you can be successful with MSTest. So, now that we’re not blaming MSTest, how do we improve our bad tests?

Learn about unit testing, integration testing, acceptance testing, ATDD, BDD, design by example, context/specification, behavior testing, UI testing, “subcutaneous” UI testing, functional testing, end-to-end tests, fast/slow tests, design tests, outside-in tests, mocks, stubs, fakes, doubles, what to test, what not to test, when to delete tests, when to apply DRY to your tests and when not, how much to maintain your tests, how to organize your tests, the misrepresentation of test fixtures as TestClasses, using automocking tools, using IoC with tests, using object mothers, using test builders. Dealing with threading. Using SQLite in-memory with your ORM to speed up your integration tests. I can’t tell you how to write your tests or why today. Everyone else (“the entire internet combined”) can.

Troubleshooting: if a test just flat-out isn’t running, find it in the test list (Test->Windows->Test List Editor) and ensure it is enabled. Disabled tests just don’t run. MSTest allows you to disable tests via the test lists view, presumably because … I don’t know why. But it can be done, and it’s really weird when someone does it and I can’t run a test and I don’t know why.

I don’t know what to do with the .vsmdi file either. Check it in and try to pretend it doesn’t exist. It stores such things as the mysterious “Test Is Enabled” flag, and details for any test lists you may have, and all of these wonderful things. If you accidentally break the vsmdi file after checking it in, use the power and magic of source control and revert changes.

Related: If you need to disable a test, use the [Ignore] attribute like every other framework. Don’t argue, just do it.

Related: I haven’t found a use for test lists. I’m applying YAGNI and ignoring them until I can figure out how to use them. Don’t use test lists unless you know why you should.

TFS as a continuous integration server

First, let me define build machine as the computer on which your TFS build agent runs. Bueno. Let’s get rolling.

Turn off code coverage? According to several blog posts (here’s one), if your build fails because “The process cannot access the file ‘data.coverage’ because it is being used by another process.”, then you need to turn off code coverage.

On your build machine, restart the build agent every evening to prevent slowness caused by memory leaks. Don’t argue, just do it, particularly if you notice your builds slow down after a while. If you’re horrified by the thought of restarting services as a rule, you should look into the wealth of options IIS provides to restart unhealthy app pools. You’re not alone. According to Unix guys, it’s the Windows way. Give in and just run the following script as a Windows Scheduled Task every night:

    REM BEGIN BATCH FILE SWEETNESS
    REM -=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-
    net stop TFSBuildServiceHost
    net start TFSBuildServiceHost
    REM -=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-=-
    REM END BATCH FILE SWEETNESS
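Registering that batch file as a nightly Scheduled Task can itself be scripted with the built-in `schtasks` command. The following is a sketch, not a recipe: the task name, the 02:00 start time, and the script path in the usage note are all placeholders I made up, and running as SYSTEM is just one way to get the rights needed to stop and start a service.

```python
import subprocess
from typing import List


def nightly_task_command(script_path: str,
                         task_name: str = "RestartTfsBuildAgent",
                         start_time: str = "02:00") -> List[str]:
    """Build a `schtasks /Create` call that runs the script once a day."""
    return [
        "schtasks", "/Create",
        "/TN", task_name,      # hypothetical task name
        "/TR", script_path,    # the batch file that restarts the service
        "/SC", "DAILY",
        "/ST", start_time,     # 24-hour HH:mm
        "/RU", "SYSTEM",       # assumption: run under the SYSTEM account
    ]
```

From an elevated prompt on the build machine, something like `subprocess.check_call(nightly_task_command(r"C:\scripts\restart-build-agent.cmd"))` would create the task; pick a time when no builds are expected to be running.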

Run only one build agent per build machine if you’re running MSTest. If you have one build machine, one build. Two machines, two builds. One per machine. Why, you ask? MSTest aborts test runs if you run two MSTest runs simultaneously. I don’t know why. If you run NUnit or just skip unit tests altogether, you can run more simultaneous builds. But to avoid phantom build failures, don’t cheat: just run one build at a time.

Similarly, don’t log into or log out of the build machine while MSTest is running, as it will abort any running MSTest test run. Seriously. I have a theory as to why this is so, but it doesn’t really matter why. Just know that, if you’re running tests, don’t log in or out.

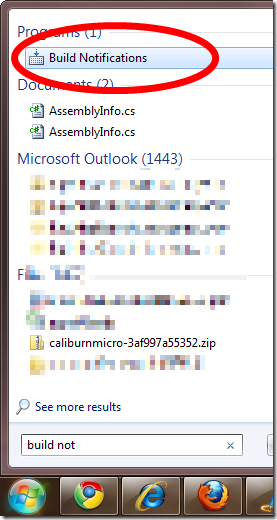

TFS has a tray app called “Build Notifications”. Use it. It works for notifications, which arrive within a few minutes of build completion. One caveat: unlike TeamCity, you are not notified when a test run begins to fail, but when the test run completes.

The tray app’s build status screen cannot be trusted to be accurate, so leave the tray app alone and just use Visual Studio/Team Explorer to look at your builds. In other words, use the TFS tray app only for the alerts.

Refresh doesn’t work on the build status screen in Visual Studio. It’s buggy and doesn’t properly refresh all the time, sometimes misplacing running or completed builds. To work around this behavior and truly refresh, close and reopen the window.

Work in progress – part 2 to come

Hello everybody! If I’m ignorant of something that would solve any of the problems I’ve experienced above (notably, speeding up test runs would be GREAT), let me know.

Assuming I get up the gumption, I’m also going to write a second post covering:

- 2 second template chooser workflow

- JFHCI, which has poisoned me against workflow foundation forever and which informs my … am I allowed to use the word philosophy when describing build systems? Let’s go with it: …informs my build system philosophy.

- Preferring a malleable (i.e., code-based, not XML or XAML) build script

- Hardcoding developer configuration the smart way in my C# project, i.e. where it’s easiest to change

- Minimizing premature configuration and thus, minimizing web.config/app.config file sizes and the nightmare that is XML transforms

- However, using WF where it works: adding build “arguments” for things that you actually do change from build to build. E.g. changing the drop folder, turning off automated deployment to a dev environment.

- Jailbreak from XAML prison:

  - Calling out to MSBuild

  - Calling out to PowerShell

  - Calling out to custom Activities in C# (and why)

Peter Seale's weblog
